Project

Agentic Execution Engine

Agentic Execution Engine is an architecture I designed for AI agents that execute multi-step plans without a workflow server. The agent follows the controller pattern: read desired state, read actual state, close the gap, terminate. Plans are human-readable documents, freely editable at any time, with stateless crash recovery via reconciliation.

- Controller Pattern, Stateless Reconciliation, Event-Driven Architecture, Plan-as-Document
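
The controller pattern above can be illustrated with a minimal sketch. The `Plan`, `World`, and `reconcile` names and the "list of step names" plan format are assumptions made for illustration, not the engine's actual data model.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Plan:
    """Desired state: an ordered list of step names (hypothetical format)."""
    steps: list[str]


@dataclass
class World:
    """Actual state: the steps that have observably completed."""
    done: set[str] = field(default_factory=set)


def reconcile(plan: Plan, world: World, execute: Callable[[str], None]) -> list[str]:
    """One pass: read desired state, read actual state, close the gap, return."""
    ran = []
    for step in plan.steps:
        if step in world.done:
            continue  # already satisfied, nothing to do
        execute(step)
        world.done.add(step)
        ran.append(step)
    return ran
```

Because the pass keeps no memory of its own, running it again after a crash or after someone edits the plan only executes the steps that are still missing from the actual state.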

Conversation Segmentation for Multi-Tenant Agents

Conversation Segmentation is an architecture I designed for handling multi-tenant agent communication. In production agentic systems, a single AI agent receives interleaved messages from multiple sources: end users, orchestrator agents, and tool-calling agents. Without segmentation, contexts bleed across tenants. My approach classifies each incoming message by tenant identity and conversational context, then routes it to an isolated thread with its own state, enabling true multi-tenant operation without cross-contamination.

- Multi-Agent Systems, Context Isolation, A2A Protocol, Agentic AI Architecture
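
A minimal sketch of the routing idea, assuming each message already carries an explicit tenant id and context id. In practice the classification step may have to infer these; the `Message`, `Thread`, and `Segmenter` names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class Message:
    tenant_id: str    # assumed: identifies the tenant the message belongs to
    context_id: str   # assumed: identifies the conversation it continues
    sender_kind: str  # "user", "orchestrator", or "tool_agent"
    text: str


@dataclass
class Thread:
    """Isolated per-conversation state; here just the message history."""
    history: list[Message] = field(default_factory=list)


class Segmenter:
    def __init__(self) -> None:
        self._threads: dict[tuple[str, str], Thread] = {}

    def route(self, msg: Message) -> Thread:
        """Append the message to the thread keyed by (tenant, context)."""
        key = (msg.tenant_id, msg.context_id)
        thread = self._threads.setdefault(key, Thread())
        thread.history.append(msg)
        return thread
```

Keying threads on the (tenant, context) pair means interleaved messages from different tenants never share a history, even when they arrive on the same channel.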

Agent Auth Proxy

Agent Auth Proxy is a unified authorization layer I designed for multi-agent systems. Calls come from two worlds: a user in a chat session, or an autonomous agent running headless. The proxy runs three steps: RBAC (skipped if no user), grant check (scoped allow/deny), and execute. Short-lived grants are user-scoped; long-lived grants are workspace-scoped so headless agents can use pre-authorized permissions.

- Multi-Agent Auth, RBAC, Scoped Grants, Interactive Consent
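
The three steps can be sketched as follows. This is an illustration under stated assumptions, not the proxy's real interface: the `Grant` fields, the `call_through_proxy` signature, the "a matching deny overrides any allow" rule, and failing closed when no grant exists (rather than prompting for consent) are all choices made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional


class Denied(Exception):
    """Raised when a call fails RBAC or has no usable grant."""


@dataclass(frozen=True)
class Grant:
    scope: str                   # "user" or "workspace"
    owner: str                   # user id or workspace id
    action: str
    allow: bool                  # scoped allow or deny
    expires_at: Optional[float]  # None marks a long-lived grant


def call_through_proxy(
    action: str,
    workspace: str,
    user: Optional[str],
    roles: dict[str, set[str]],
    grants: list[Grant],
    now: float,
    run: Callable[[], object],
) -> object:
    # Step 1: RBAC, skipped when there is no user (headless agent).
    if user is not None and action not in roles.get(user, set()):
        raise Denied(f"rbac: {user} may not {action}")

    # Step 2: grant check over unexpired grants that match this caller.
    live = [
        g for g in grants
        if g.action == action
        and (g.expires_at is None or g.expires_at > now)
        and ((g.scope == "user" and g.owner == user)
             or (g.scope == "workspace" and g.owner == workspace))
    ]
    if any(not g.allow for g in live):
        raise Denied(f"grant: explicit deny for {action}")  # assumed: deny wins
    if not live:
        raise Denied(f"grant: no grant for {action}")

    # Step 3: execute the underlying call.
    return run()
```

A headless call passes `user=None`, so it skips RBAC and can only succeed through a workspace-scoped grant, which matches the pre-authorization idea described above.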

AI Music Composer

AI Music Composer is a web application that generates original classical music from natural language descriptions. Describe a mood, style, or composer influence, and the AI composes music rendered as interactive sheet music with in-browser playback. Built with the Claude API, Vercel serverless functions, and abcjs.

- Claude API, JavaScript, Vercel Serverless, abcjs, Web Audio API

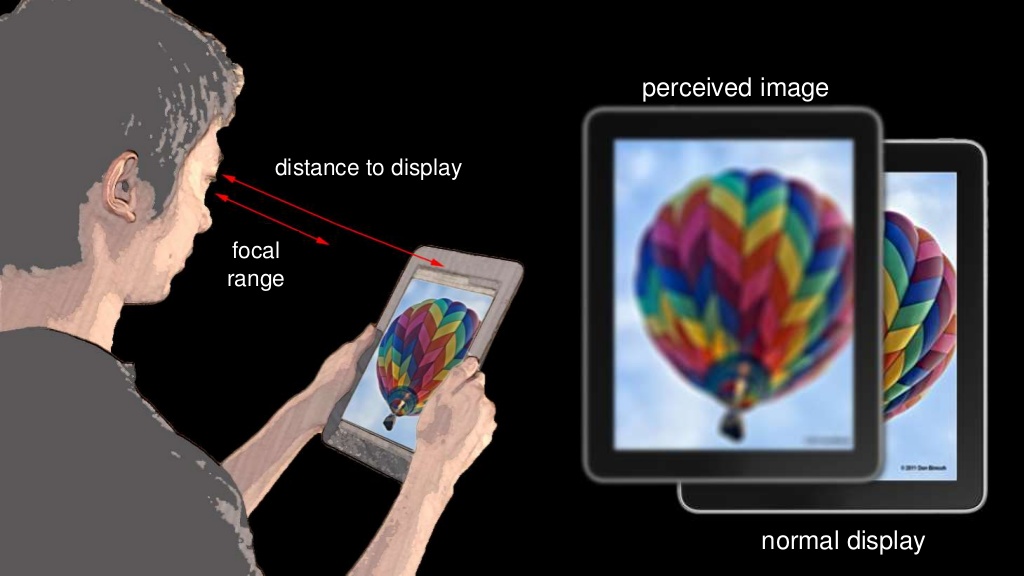

Vision Correcting Display

Vision Correcting Display is my capstone project at UC Berkeley. The goal of the project is to design a digital display that appears clear to people with visual impairments. I am currently working on improving the method with a more precise eye model.

- Graphics, Vision Science

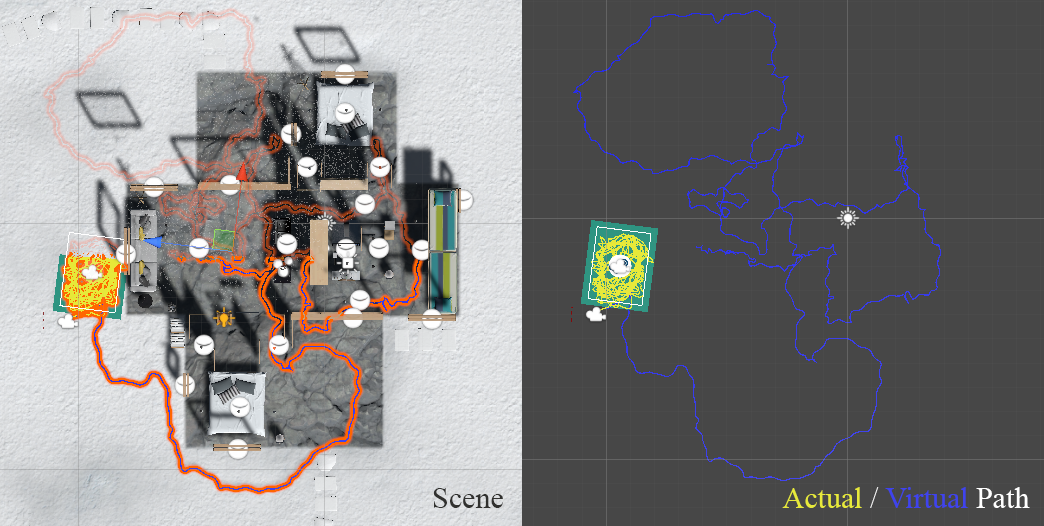

Infinite Walking in VR

Infinite Walking is a group project I did at UC Berkeley. The objective was to design and develop a VR program that lets users freely explore a virtual scene considerably larger than a room-scale tracked space. My teammates and I proposed a software system that combines redirected walking (RDW) techniques with a user interface (UI) to avoid user-obstacle collisions.

- HCI, Unity, VR

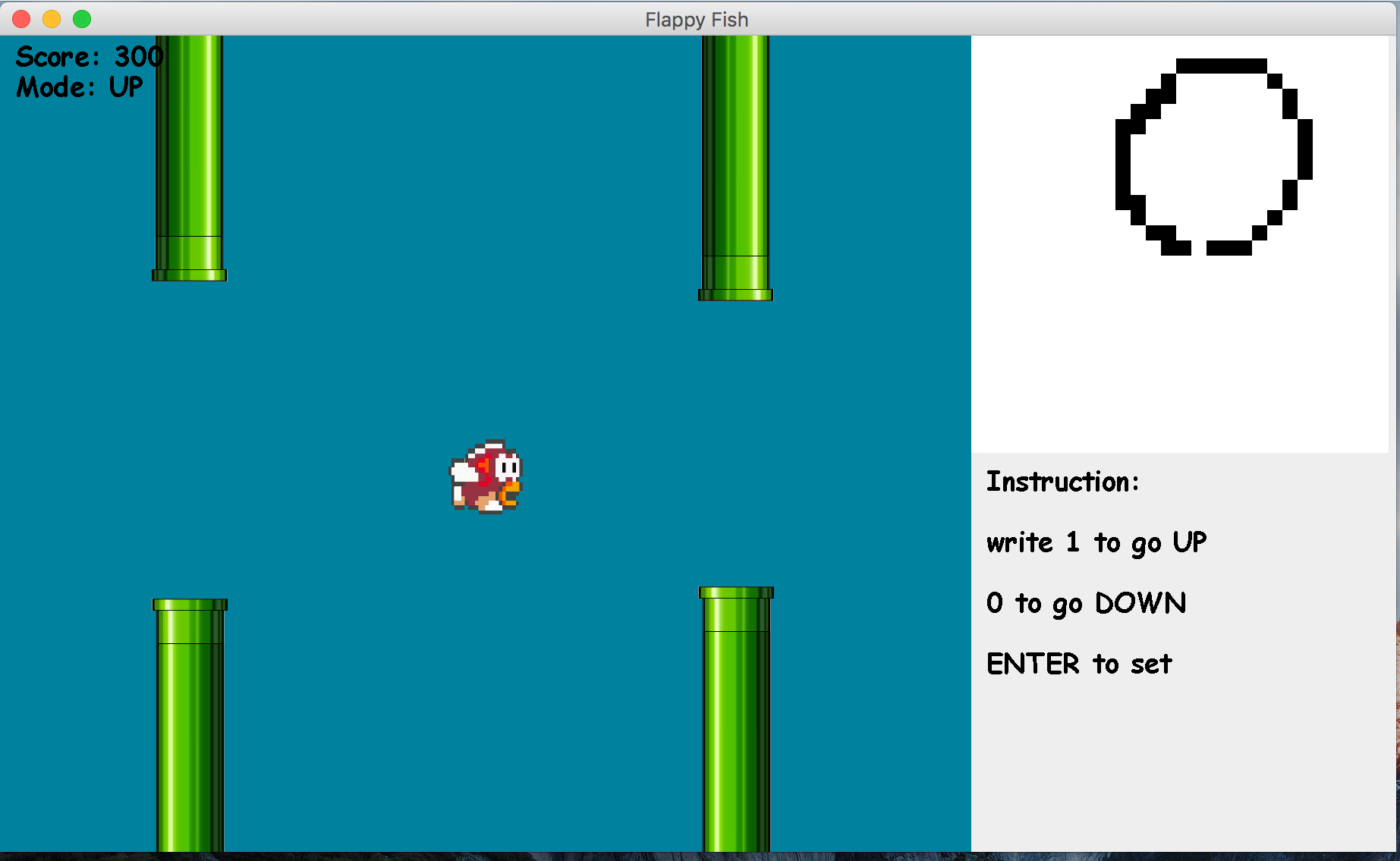

Flappy Fish

Flappy Fish is a game project I did as a special study at Smith College. I implemented logistic regression and designed the game around the MVC pattern. The game takes handwriting as input and uses the recognition results to drive in-game interaction.

- Game Design, Machine Learning, Java